```cpp
#include <stdlib.h>
#include <stdio.h>

int main()
{
    return 0;
}
```

I used NO graphics libraries or any outside code other than the standard C/C++ stuff in Visual Studio Express 2005. I decided to output to Windows BMP files since the format is braindead-simple.
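To give a sense of how little a BMP needs, here is a minimal sketch of a 24-bit writer. It is not the code from this project: the names `write_bmp` and `put_le`, and the top-row-first RGB buffer layout, are assumptions made for the example. Note the `"wb"` mode passed to `fopen`; on Windows, text mode rewrites newline bytes and scrambles the image.

```cpp
#include <cstdint>
#include <cstdio>

// Write `bytes` bytes of `v`, least significant byte first (BMP is little-endian).
static void put_le(std::FILE *f, std::uint32_t v, int bytes)
{
    for (int i = 0; i < bytes; i++)
        std::fputc(static_cast<int>((v >> (8 * i)) & 0xFF), f);
}

// Save a 24-bit uncompressed BMP. `rgb` holds w*h pixels, 3 bytes each
// (R, G, B), top row first (an assumed layout). Returns true on success.
bool write_bmp(const char *path, const unsigned char *rgb, int w, int h)
{
    const int pad = (4 - (w * 3) % 4) % 4;   // rows are padded to a multiple of 4 bytes
    const std::uint32_t data_size = static_cast<std::uint32_t>((w * 3 + pad) * h);

    std::FILE *f = std::fopen(path, "wb");   // binary mode: no newline translation
    if (!f) return false;

    // File header, 14 bytes
    std::fputc('B', f);
    std::fputc('M', f);
    put_le(f, 54 + data_size, 4);            // total file size
    put_le(f, 0, 4);                         // reserved
    put_le(f, 54, 4);                        // offset of the pixel data

    // Info header (BITMAPINFOHEADER), 40 bytes
    put_le(f, 40, 4);                        // size of this header
    put_le(f, static_cast<std::uint32_t>(w), 4);
    put_le(f, static_cast<std::uint32_t>(h), 4);  // positive height: rows stored bottom-up
    put_le(f, 1, 2);                         // color planes
    put_le(f, 24, 2);                        // bits per pixel
    put_le(f, 0, 4);                         // no compression
    put_le(f, data_size, 4);
    put_le(f, 2835, 4);                      // horizontal resolution, about 72 DPI
    put_le(f, 2835, 4);                      // vertical resolution
    put_le(f, 0, 4);                         // palette size (unused)
    put_le(f, 0, 4);                         // important colors (unused)

    // Pixel rows, bottom row first, each pixel stored as B, G, R
    for (int y = h - 1; y >= 0; y--) {
        for (int x = 0; x < w; x++) {
            const unsigned char *p = rgb + 3 * (y * w + x);
            std::fputc(p[2], f);
            std::fputc(p[1], f);
            std::fputc(p[0], f);
        }
        for (int i = 0; i < pad; i++)
            std::fputc(0, f);
    }
    return std::fclose(f) == 0;
}
```

Calling `write_bmp("out.bmp", pixels, width, height)` after the render loop would be enough to get a viewable file.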

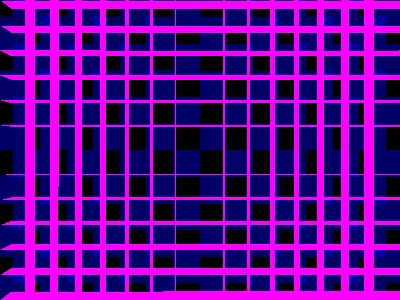

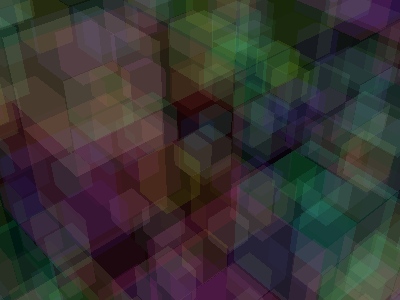

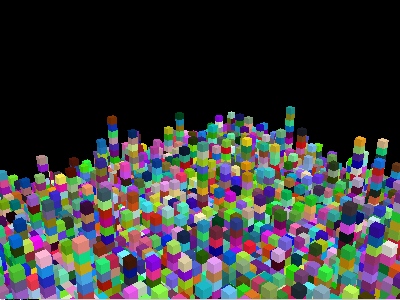

First image I got to render, at about 10:00 PM. For simplicity I decided the world would be a 16x16x16 matrix of either colored or invisible blocks. I initialized the world with a checkerboard of solid blue blocks on the far wall. The goal was to render the scene and look for any checkerboard pattern to see if it was working. The pink pixels are the ones that failed to render due to some sort of bug. Rather than die when the raytrace() function has a problem, it just returns pink.
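A rough sketch of that starting world might look like the following. The `Block` and `Color` types, the choice of `z = WORLD - 1` as the "far wall", and the `block_color` helper are all assumptions for illustration; only the 16x16x16 size, the blue checkerboard, and the pink error color come from the description above.

```cpp
struct Color { unsigned char r, g, b; };
struct Block { bool solid; Color color; };

const int WORLD = 16;
const Color PINK = {255, 0, 255};   // "something went wrong" marker
const Color BLUE = {0, 0, 255};

// Indexed [x][y][z]. Static storage starts zeroed, so every block begins invisible.
static Block world[WORLD][WORLD][WORLD];

// Put a checkerboard of solid blue blocks on the far wall (assumed to be z = WORLD - 1).
void init_checkerboard()
{
    for (int x = 0; x < WORLD; x++) {
        for (int y = 0; y < WORLD; y++) {
            Block &b = world[x][y][WORLD - 1];
            b.solid = ((x + y) % 2 == 0);
            b.color = BLUE;
        }
    }
}

// Look up a block's color. Instead of crashing on a bad index, return pink
// so the failure shows up in the rendered image.
Color block_color(int x, int y, int z)
{
    if (x < 0 || y < 0 || z < 0 || x >= WORLD || y >= WORLD || z >= WORLD)
        return PINK;
    return world[x][y][z].color;
}
```

Returning a loud color instead of aborting means one bad ray costs one pixel, and the picture itself shows where the bug lives.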

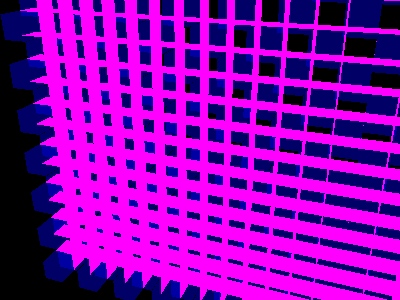

I moved the camera to see if it would change the perspective. It did! A good sign that things were really rendering in 3D.

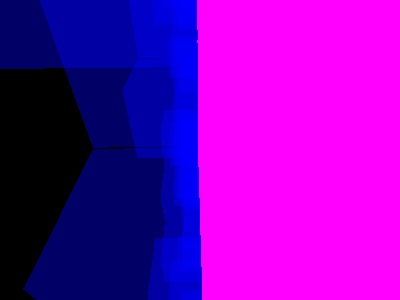

Then I put the camera in the blocks. As you can see, they are partially transparent. I think they were about 15% solid. The pink on the right is where rays exited the 16x16x16 world and had some sort of failure.

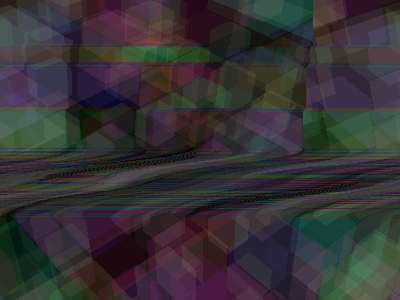

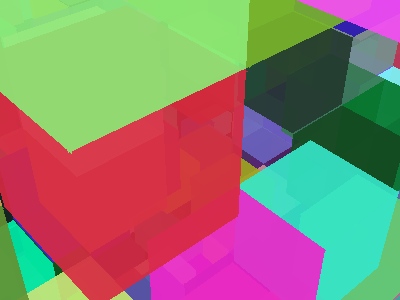

I threw out the checkerboard and randomly placed random-colored blocks throughout the world. I forgot to use binary mode when writing the BMP file, which led to the distortion.

Fixed the binary mode glitch.

I made the blocks less transparent. Ok, now there are too many blocks.

...So I set the random generation to make fewer blocks.

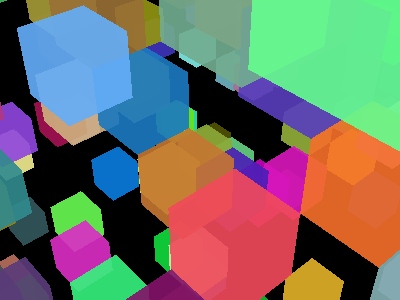

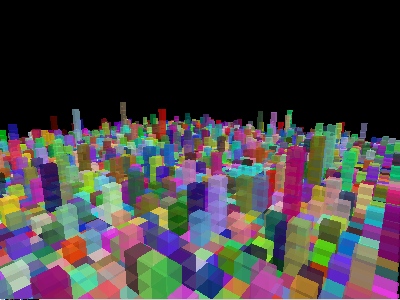

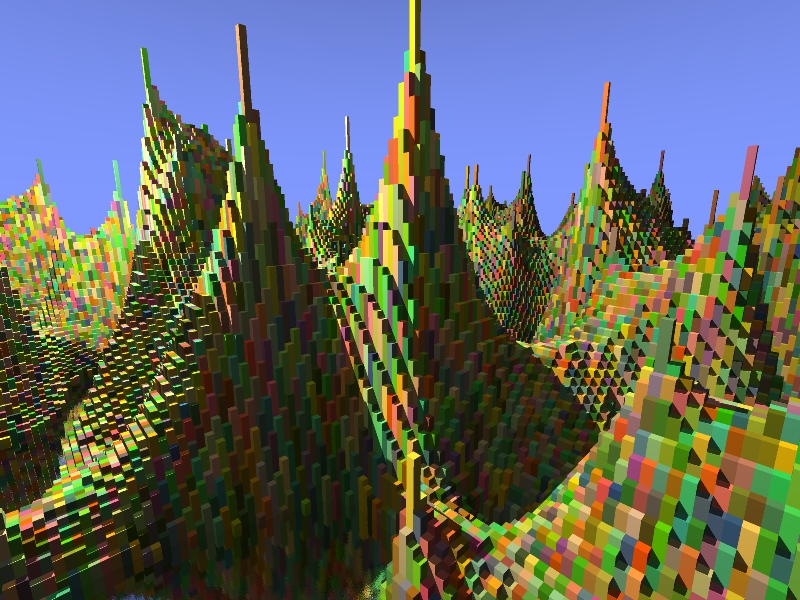

Instead of completely randomly placed blocks, I decided to place a floor layer filled with blocks, and then randomly stack blocks on top here and there. The goal was to create a city-scape kind of environment. I also made it so when the ray hits different sides of the cubes, it grabs a slightly different brightness value.
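The floor-plus-stacks idea can be sketched in a few lines. This is an illustration under assumptions, not the original generator: the function name `build_city`, its parameters, and the plain `bool` grid are made up for the example.

```cpp
#include <cstdlib>

const int N = 16;
static bool solid[N][N][N];   // indexed [x][y][z], with y pointing up (assumed)

// Fill the floor layer, then stack a random tower on roughly
// `tower_percent` percent of the columns. `max_extra` must be at least 1.
void build_city(unsigned seed, int tower_percent, int max_extra)
{
    std::srand(seed);
    for (int x = 0; x < N; x++) {
        for (int z = 0; z < N; z++) {
            int height = 1;                               // the floor block
            if (std::rand() % 100 < tower_percent)
                height += 1 + std::rand() % max_extra;    // 1..max_extra blocks on top
            if (height > N) height = N;
            for (int y = 0; y < N; y++)
                solid[x][y][z] = (y < height);
        }
    }
}
```

Building each column from the ground up guarantees there are no floating blocks, which is what makes the result read as buildings rather than noise.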

Here I increased the size of the world from 16x16x16 to 64x64x64.

Making sure the transparency still works. The cool thing is this didn't really slow down the render that much. Only by about 5%!

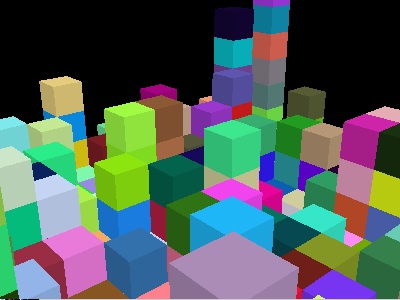

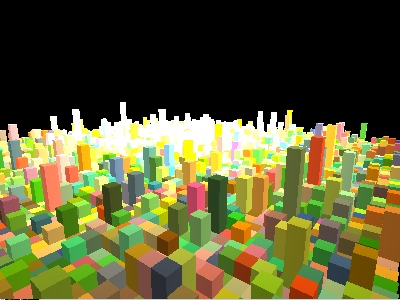

I added a light source. The farther away it is, the less light hits each pixel: brightness falls off with the inverse square of the distance. Just like real life!
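The falloff itself is a one-liner. Here is a minimal sketch; the `Vec3` type, the `light_at` name, and the `power` parameter are assumptions for the example.

```cpp
struct Vec3 { double x, y, z; };

// Light arriving at `point` from a point light at `light`.
// `power` is the brightness at distance 1; intensity drops as 1 / distance^2.
double light_at(Vec3 point, Vec3 light, double power)
{
    double dx = light.x - point.x;
    double dy = light.y - point.y;
    double dz = light.z - point.z;
    double dist2 = dx * dx + dy * dy + dz * dz;   // squared distance, no sqrt needed
    if (dist2 < 1e-9) dist2 = 1e-9;               // avoid dividing by zero at the light itself
    return power / dist2;
}
```

With `power = 100`, a point 2 units away gets 25 and a point 4 units away gets 6.25: double the distance, a quarter of the light.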

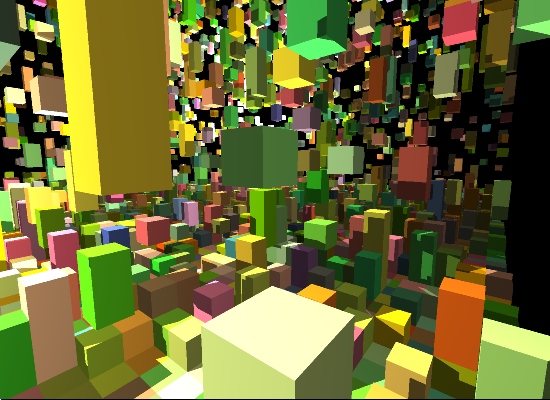

Now we're getting somewhere! When a ray from the screen hits a solid block, the code computes a new ray from the hit point to the light source. If this ray doesn't hit an object, we have light! Otherwise, shadow. The result is pixel-perfect lighting and shadows. I added tons of random blocks to the "sky" to make the effect more dramatic.
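Here is a hedged sketch of that lit-or-shadow test. To keep it short it walks the shadow ray in small fixed steps rather than doing exact block-by-block traversal, so it is an approximation and not the project's actual method. The names `is_lit` and `solid_at` and the 0.05 step size are assumptions.

```cpp
struct Vec3 { double x, y, z; };

const int N = 16;
static bool solid[N][N][N];   // indexed [x][y][z]

// True if the point lies inside a solid block. Anything outside the world is empty.
static bool solid_at(double x, double y, double z)
{
    if (x < 0 || y < 0 || z < 0 || x >= N || y >= N || z >= N) return false;
    return solid[(int)x][(int)y][(int)z];
}

static double abs_val(double v) { return v < 0 ? -v : v; }

// Walk from the hit point `p` toward `light`. Any solid block on the way means shadow.
// `p` should sit just outside the surface it was found on, or it will shadow itself.
bool is_lit(Vec3 p, Vec3 light)
{
    Vec3 d = {light.x - p.x, light.y - p.y, light.z - p.z};

    // Pick enough samples that no axis moves more than about 0.05 blocks per step.
    double longest = abs_val(d.x);
    if (abs_val(d.y) > longest) longest = abs_val(d.y);
    if (abs_val(d.z) > longest) longest = abs_val(d.z);
    int steps = (int)(longest / 0.05) + 1;

    for (int i = 1; i < steps; i++) {
        double t = (double)i / steps;   // 0 at the hit point, 1 at the light
        if (solid_at(p.x + d.x * t, p.y + d.y * t, p.z + d.z * t))
            return false;               // something is in the way: shadow
    }
    return true;                        // clear path to the light
}
```

Because every pixel gets its own test, the shadow edges come out exactly as sharp as the blocks that cast them.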

Added a second light source and took another pic.

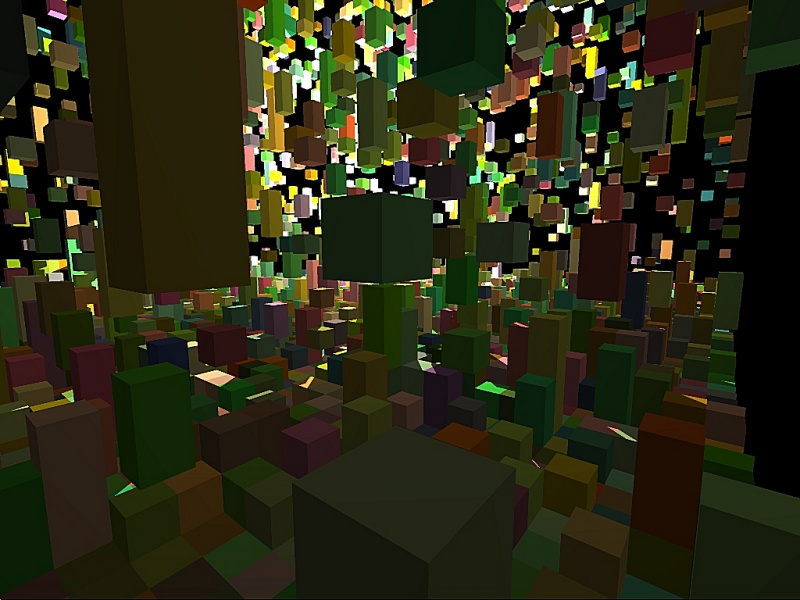

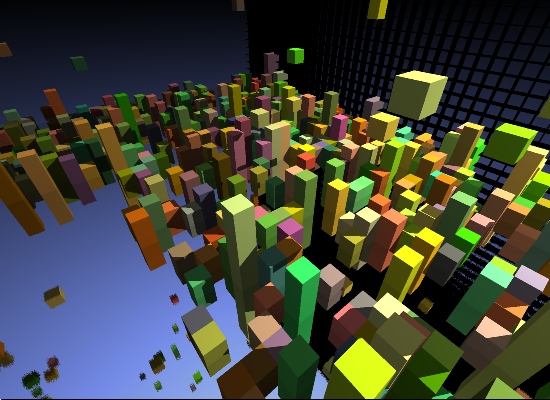

I got sick of that black background so I tried to add a blue sky. The steepness at which a ray escapes the world determines the brightness it returns. I got it backwards so the sky is upside-down. :/ More importantly, I made the lowest layer of blocks reflective! When a ray hits one, it bounces off and keeps searching for something else to collide with. This was quite simple to do. Also those "pink" error pixels are back except now they are black. They were probably there all along, hiding in the dark... :O
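Both pieces are small enough to sketch. The function names, the 0.3 to 1.0 brightness range, and the choice to make the horizon brighter than straight up are all assumptions for the example. Since the floor is level, the bounce here is just a sign flip on the vertical component.

```cpp
#include <cmath>

struct Vec3 { double x, y, z; };

// Bounce a ray off a level, upward-facing surface: mirror the vertical part.
Vec3 bounce_off_floor(Vec3 dir)
{
    dir.y = -dir.y;
    return dir;
}

// Brightness of the sky for a ray that escaped the world, from its steepness.
// Returns 1.0 at the horizon, fading to 0.3 straight up (assumed values).
double sky_brightness(Vec3 dir)
{
    double len = std::sqrt(dir.x * dir.x + dir.y * dir.y + dir.z * dir.z);
    if (len == 0.0) return 0.0;      // degenerate direction: treat as black
    double steep = dir.y / len;      // 0 = level, 1 = straight up
    if (steep < 0.0) steep = 0.0;    // rays heading downward count as the horizon
    return 1.0 - 0.7 * steep;
}
```

Flipping the sign of `steep` in the last line is all it takes to turn the sky upside-down.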

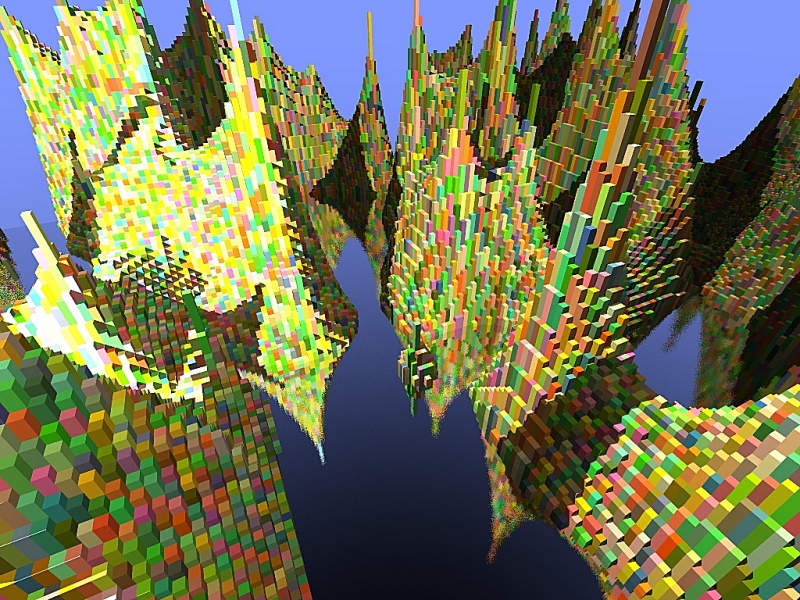

A real good shot of the pixel-perfect shadowing. If a ray can make it to a colored block and jump off directly to the light source without any other collisions, you get a lit pixel. It's that simple! The "terrain" is generated with a pretty basic algorithm of picking completely random heights for a few seed points, then smoothing between them. Finally fixed the error pixels. When a ray exited the world into -x or -y space, my code didn't make sense and was causing an infinite loop. Well, infinite except for my emergency `if(k>1000) break;` statement.
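One way to read "random seed heights, then smoothing between them" is a coarse grid of random heights with bilinear interpolation in between. That interpretation, along with the names and the 16-block seed spacing below, is an assumption; the original smoothing may well work differently.

```cpp
#include <cstdlib>

const int SIZE = 64;                    // world width and depth
const int SPACING = 16;                 // blocks between seed points (assumed)
const int SEEDS = SIZE / SPACING + 1;   // seed points along each side
static int height_map[SIZE][SIZE];      // column heights, indexed [x][z]

// Pick random heights on a coarse grid, then blend linearly between
// the four nearest seed points to get every column's height.
void build_terrain(unsigned seed, int max_height)
{
    double seeds[SEEDS][SEEDS];
    std::srand(seed);
    for (int i = 0; i < SEEDS; i++)
        for (int j = 0; j < SEEDS; j++)
            seeds[i][j] = std::rand() % (max_height + 1);

    for (int x = 0; x < SIZE; x++) {
        for (int z = 0; z < SIZE; z++) {
            int i = x / SPACING, j = z / SPACING;          // seed cell containing (x, z)
            double fx = (x % SPACING) / (double)SPACING;   // 0..1 position inside the cell
            double fz = (z % SPACING) / (double)SPACING;
            double near_edge = seeds[i][j] * (1 - fx) + seeds[i + 1][j] * fx;
            double far_edge = seeds[i][j + 1] * (1 - fx) + seeds[i + 1][j + 1] * fx;
            height_map[x][z] = (int)(near_edge * (1 - fz) + far_edge * fz + 0.5);
        }
    }
}
```

After `build_terrain(42, 20)`, filling each column of the world up to `height_map[x][z]` gives rolling hills instead of a city.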

A shot of the edge of the world. You can also see the terrain reflected in the lowest layer of blocks.

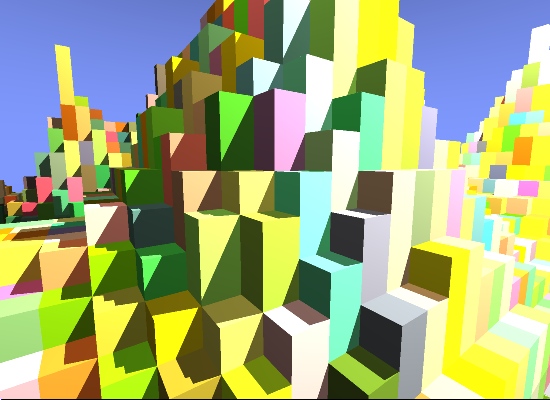

Increased the size of the world again. And then fell asleep. It was about 5:00 AM. :D

Added some intentional inaccuracy when a ray bounces off a reflective surface. This scattering causes the surface to look textured, or like waves of really reflective water.
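A sketch of that kind of scattering: nudge each component of the bounced direction by a small random amount. The `scatter` and `jitter` names and the uniform noise are assumptions; the original may perturb the ray differently.

```cpp
#include <cstdlib>

struct Vec3 { double x, y, z; };

// Uniform random value in [-amount, +amount].
static double jitter(double amount)
{
    return (std::rand() / (double)RAND_MAX * 2.0 - 1.0) * amount;
}

// Perturb a reflected direction. `amount` = 0 gives a perfect mirror;
// larger values make the surface look rougher or wavier.
Vec3 scatter(Vec3 dir, double amount)
{
    dir.x += jitter(amount);
    dir.y += jitter(amount);
    dir.z += jitter(amount);
    return dir;
}
```

If the tracer expects unit-length directions, the result would need to be normalized again before use.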

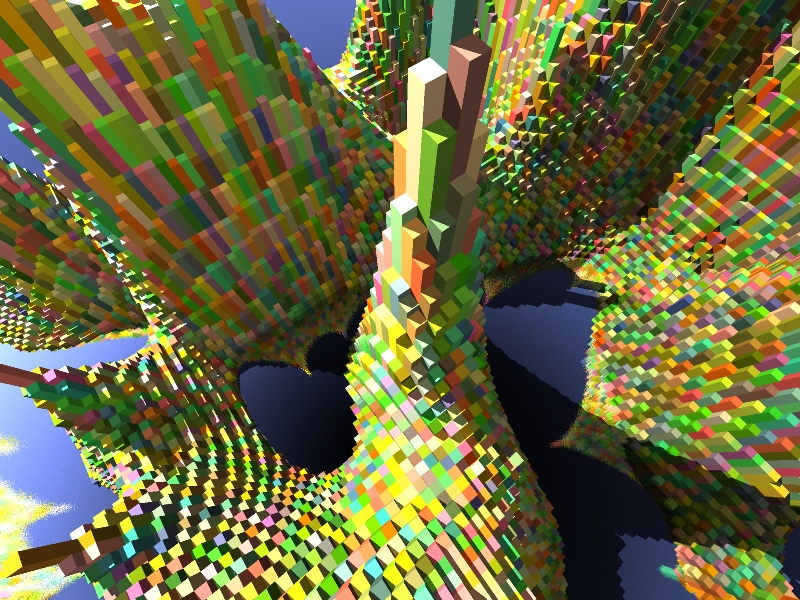

Added shadows to reflective surfaces. This was very easy, all I had to do was do the lighting calculation even when the ray bounces. Really just a re-ordering of some lines of code.

I set a square region in the middle of the world to a flat 30 blocks tall and made them all reflective. I wanted to try reflection on more than just level surfaces.

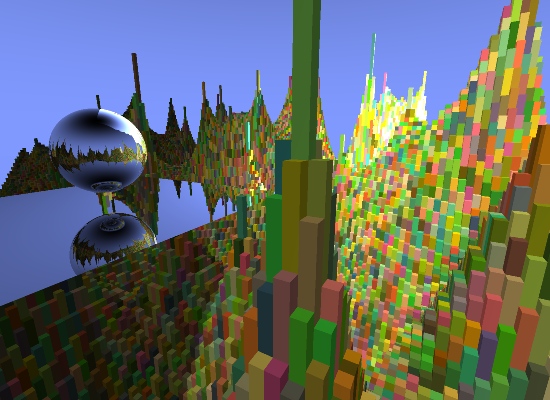

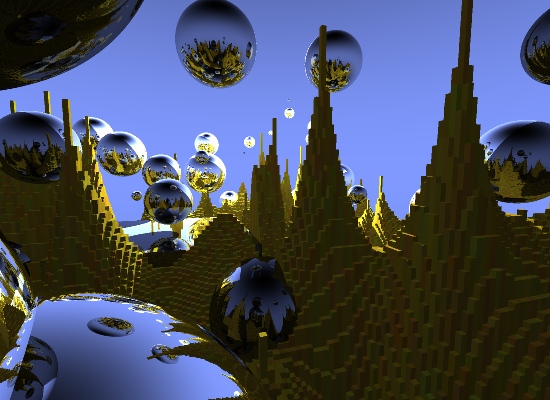

Whoa look at that! As cool as this looks it really wasn't too tough with ray-tracing! The sphere is just a center point and a radius. Each time the code shoots a ray into the scene, it calculates the distance to the first intersection point with the sphere. This is just a matter of solving for the roots of a quadratic equation. There are always 0, 1, or 2 points where a ray will hit a sphere: a miss, a tangential graze, or an entry and an exit wound. Once we have the hit point on the sphere, the surface normal is just the direction of the radius vector from the center to the hit point, so we can easily bounce, and get a new ray! The rest is already taken care of by the old reflection code. Lighting for the hit point on the sphere is already handled by the old lit-test code, too.
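For the curious, here is a minimal sketch of the quadratic. Substituting the ray `origin + t*dir` into the sphere equation `|p - center|^2 = radius^2` gives `a*t^2 + b*t + c = 0` with `a = dir.dir`, `b = 2*(origin - center).dir`, and `c = |origin - center|^2 - radius^2`. The function and type names are assumptions, not the project's own.

```cpp
#include <cmath>

struct Vec3 { double x, y, z; };

static double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
static Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }

// Distance t along origin + t*dir to the first hit on the sphere, or -1 on a miss.
double hit_sphere(Vec3 origin, Vec3 dir, Vec3 center, double radius)
{
    Vec3 oc = sub(origin, center);
    double a = dot(dir, dir);
    double b = 2.0 * dot(oc, dir);
    double c = dot(oc, oc) - radius * radius;
    double disc = b * b - 4.0 * a * c;             // negative: no real roots, so a miss
    if (a == 0.0 || disc < 0.0) return -1.0;

    double root = std::sqrt(disc);
    double t = (-b - root) / (2.0 * a);            // nearer root: the entry point
    if (t < 1e-9) t = (-b + root) / (2.0 * a);     // started inside: use the exit point
    return t >= 1e-9 ? t : -1.0;                   // both behind the ray: miss
}

// Mirror `dir` about the unit surface normal `n`.
Vec3 reflect(Vec3 dir, Vec3 n)
{
    double k = 2.0 * dot(dir, n);
    return {dir.x - k * n.x, dir.y - k * n.y, dir.z - k * n.z};
}
```

Given a hit at distance `t`, the hit point is `origin + t*dir`, and the normal to pass to `reflect` is `(hit - center) / radius`.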

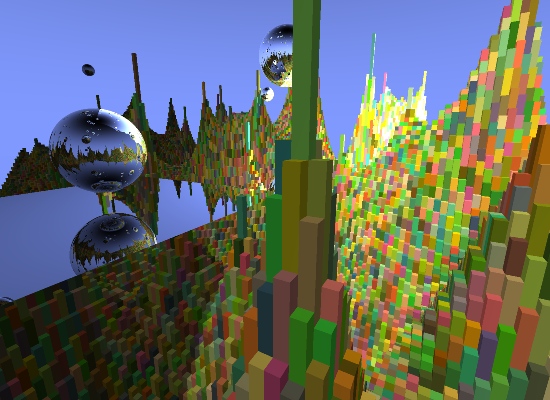

Added 100 more spheres, but most of them are way up in the sky. :/ (You can see 'em in the reflections.) All these spheres barely slow down the render, by just 10-15%! Using bounding boxes we can avoid testing most spheres most of the time. Since most rays don't reflect, and the ones that do are unlikely to do it again, I allowed up to 100 bounces per ray shot into the scene, without much worry.
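One common way to do a bounding-box rejection is the "slab" test below: clip the ray against each pair of box faces and see whether any part of it survives. How the boxes were actually organized in this project isn't described, so treat this as a generic sketch with assumed names.

```cpp
struct Vec3 { double x, y, z; };

// True if the ray origin + t*dir (t >= 0) passes through the
// axis-aligned box with corners `lo` and `hi`.
bool ray_hits_box(Vec3 origin, Vec3 dir, Vec3 lo, Vec3 hi)
{
    const double o[3] = {origin.x, origin.y, origin.z};
    const double d[3] = {dir.x, dir.y, dir.z};
    const double mn[3] = {lo.x, lo.y, lo.z};
    const double mx[3] = {hi.x, hi.y, hi.z};

    double t_enter = 0.0;     // latest entry across all axes so far
    double t_exit = 1e30;     // earliest exit across all axes so far

    for (int axis = 0; axis < 3; axis++) {
        if (d[axis] == 0.0) {
            // Ray is parallel to this pair of faces: it must already be between them.
            if (o[axis] < mn[axis] || o[axis] > mx[axis]) return false;
        } else {
            double t1 = (mn[axis] - o[axis]) / d[axis];
            double t2 = (mx[axis] - o[axis]) / d[axis];
            if (t1 > t2) { double tmp = t1; t1 = t2; t2 = tmp; }
            if (t1 > t_enter) t_enter = t1;
            if (t2 < t_exit) t_exit = t2;
            if (t_enter > t_exit) return false;   // left one slab before entering another
        }
    }
    return true;
}
```

A tracer would call this with a sphere's box (center minus and plus radius on each axis) and only run the full sphere intersection when it returns true.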

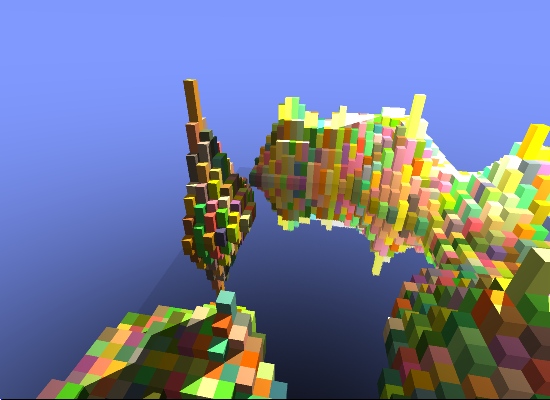

Capped the max height of randomly placed spheres to try and see how they intersect with the terrain and stuff.

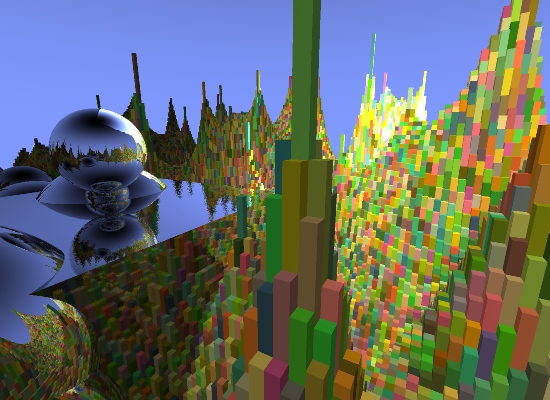

Turned the camera to the left a little. (Actually I moved the target to just below that original sphere.)

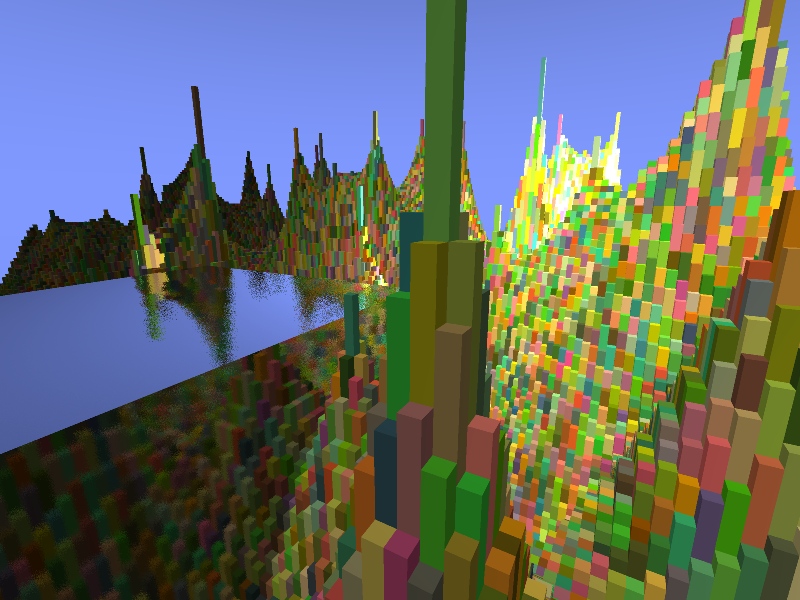

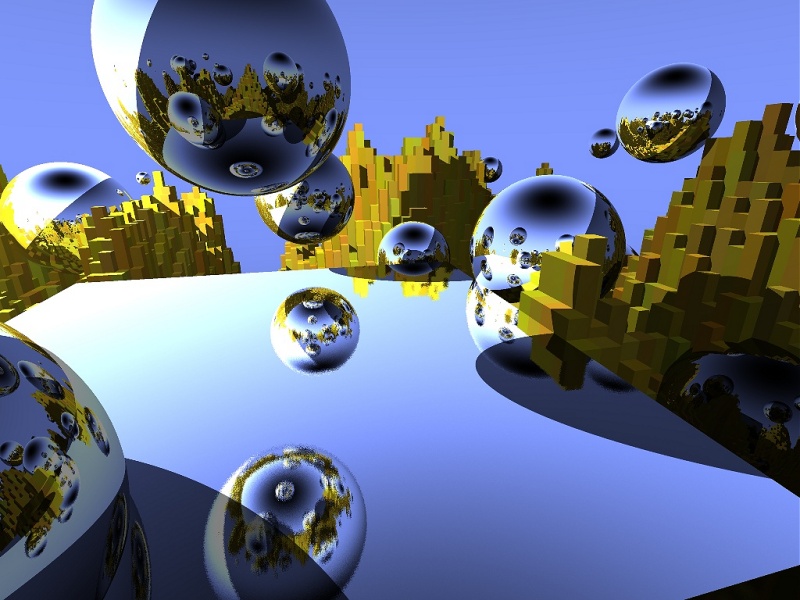

I decided to make the terrain colors less random and more yellow. I lost the random generation seed for this shot or I'd render a high-res one. :(

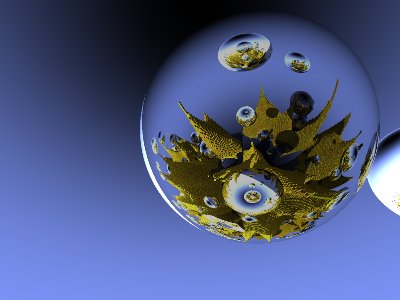

Looking up at the underside of a high-in-the-sky sphere. There is a potential for a ray to bounce infinitely between spheres but this is unlikely. You have to give up after some number of bounces.

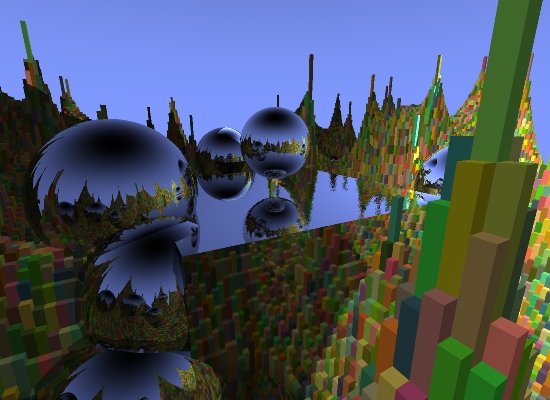

This was the first shot I used for my wallpaper. Nothing new here really but I did mess up the terrain generation. After this shot I took some mystery pills for my toothache and I don't remember what all happened but that was the end of coding for the day!

Video Clips!

These were created by rendering a sequence of still images and stringing them together in an MPG with VideoMach. Each frame I moved the camera a little and the spheres slide up, down or stay still based on their array index modulo 3.

Low-Res Video Clip

High-Res Video Clip
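The modulo-3 trick for the sphere motion is tiny. Which remainder maps to which motion, and the `speed` parameter, are assumptions for this sketch.

```cpp
// Vertical offset of sphere number `index` at animation frame `frame`.
// Remainder 0 slides up, 1 slides down, 2 stays put (an assumed mapping).
double sphere_offset(int index, int frame, double speed)
{
    switch (index % 3) {
        case 0:  return frame * speed;
        case 1:  return -frame * speed;
        default: return 0.0;
    }
}
```

Adding `sphere_offset(i, frame, speed)` to sphere `i`'s height before rendering each frame gives the mixed motion without storing any per-sphere state.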

Source Code

Here's the source code at this point if you're into that sorta thing. Please remember that I wrote this over a weekend for the hell of it so the code is a bit... fresh. Now Windows and *nix compatible! VS2005 Project files and g++ Makefile are included.

PixelMachine Source Code
